Scraping, meaning extracting information from a web page, typically comes up in two cases:

- In testing and monitoring, asserting against the state of one or more elements on a page.

- In general, gathering data for a variety of different purposes.

You can use Playwright as a library to scrape data from web pages, without also using Playwright for testing.

Scraping element attributes & properties

Below is an example running against our test site, getting and printing out the href attribute of the first a element on the homepage.

That just happens to be our logo, which links right back to our homepage, and therefore will have an href value equal to the URL we navigate to using page.goto():

```javascript
// Example code for getting href attribute
const { chromium } = require('playwright');

(async () => {
  const browser = await chromium.launch();
  const page = await browser.newPage();
  await page.goto('https://danube-web.shop/');

  // getAttribute() resolves the selector to the first matching element
  const href = await page.getAttribute('a', 'href');
  console.log('First link href:', href);

  await browser.close();
})();
```
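Note that `getAttribute()` returns the attribute as written in the markup, so a scraped `href` can be relative. A minimal sketch of normalizing it with Node's built-in `URL` class follows; `toAbsoluteUrl` is a hypothetical helper introduced here for illustration, not part of Playwright:

```javascript
// Hypothetical helper (not part of Playwright): turn a scraped href into an
// absolute URL, using the page URL as the base for relative values.
function toAbsoluteUrl(href, pageUrl) {
  // getAttribute() resolves to null when the attribute is missing
  if (href == null) return null;
  return new URL(href, pageUrl).href;
}

// Possible usage inside the script above, after page.goto():
//   const href = await page.getAttribute('a', 'href');
//   console.log(toAbsoluteUrl(href, page.url()));
```

Absolute values pass through unchanged, so the helper can be applied to every scraped link without checking its form first.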

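Alternatively, we can first retrieve an element handle and then read the attribute from it. The example below prints the href value of the first a element of our homepage: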

```javascript
// Example code for getting href using element handle
const { chromium } = require('playwright');

(async () => {
  const browser = await chromium.launch();
  const page = await browser.newPage();
  await page.goto('https://danube-web.shop/');

  // page.$() resolves to a handle for the first matching element
  const element = await page.$('a');
  const href = await element.getAttribute('href');
  console.log('First link href:', href);

  await browser.close();
})();
```

The innerText property is often used in tests to assert that some element on the page contains the expected text.
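As a rough sketch of such a check, the helper below reads an element's text with Playwright's `page.innerText()` and compares it with an expected string. The names `normalizeText` and `innerTextMatches` are hypothetical, and the whitespace normalization is an assumption about what a test would typically want, not something the page requires:

```javascript
// Collapse runs of whitespace so layout differences do not break the comparison.
function normalizeText(text) {
  return text.replace(/\s+/g, ' ').trim();
}

// Read an element's innerText and compare it with the expected text.
// `page` is a Playwright Page; selector and expected text come from the caller.
async function innerTextMatches(page, selector, expected) {
  const actual = await page.innerText(selector);
  return normalizeText(actual) === normalizeText(expected);
}

// Possible usage (selector and text are placeholders):
//   const ok = await innerTextMatches(page, 'h1', 'Some expected heading');
```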

Scraping lists of elements

Scraping element lists is just as easy. For example, let’s grab the innerText of each product category shown on the homepage:

```javascript
// Example code for getting text values from multiple elements
const { chromium } = require('playwright');

(async () => {
  const browser = await chromium.launch();
  const page = await browser.newPage();
  await page.goto('https://danube-web.shop/');

  // $$eval() runs the callback in the page with all matching elements
  const categories = await page.$$eval('.category-link', elements =>
    elements.map(element => element.innerText)
  );
  console.log('Categories:', categories);

  await browser.close();
})();
```
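Scraped text can carry stray whitespace or repeated entries. A minimal sketch of a clean-up step follows, assuming an array of strings like the one returned by `$$eval` above; `cleanTextList` is a hypothetical helper, not a Playwright function:

```javascript
// Hypothetical post-processing step: trim each value, drop empty strings,
// and remove duplicates while preserving the original order.
function cleanTextList(values) {
  const trimmed = values.map(value => value.trim()).filter(value => value.length > 0);
  return [...new Set(trimmed)];
}

// Possible usage with the result of the example above:
//   console.log('Categories:', cleanTextList(categories));
```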

Scraping images

Scraping images from a page is also possible. For example, we can easily get the logo of our test website and save it as a file:

```javascript
// Example code for scraping and saving images
const { chromium } = require('playwright');
const axios = require('axios');
const fs = require('fs');

(async () => {
  const browser = await chromium.launch();
  const page = await browser.newPage();
  await page.goto('https://danube-web.shop/');

  const imageSrc = await page.getAttribute('img[alt="logo"]', 'src');
  // The src attribute may be relative, so resolve it against the page URL
  const imageUrl = new URL(imageSrc, page.url()).href;
  console.log('Image source:', imageUrl);

  // Stream the response body straight into a file
  const response = await axios.get(imageUrl, { responseType: 'stream' });
  const writer = fs.createWriteStream('logo.png');
  response.data.pipe(writer);

  await browser.close();
})();
```

Here we use axios to make a GET request against the source URL of the image. The response body will contain the image itself, which can be written to a file using fs.
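The example pipes the response into the file without waiting for the write to complete. If later steps depend on the file being fully written, one option is Node's promise-based `pipeline`. This is a sketch under that assumption; `saveStream` is a hypothetical helper name:

```javascript
const fs = require('fs');
const { pipeline } = require('stream/promises');

// Write a readable stream to disk. The promise resolves once the pipeline has
// completed and rejects if the source or the file stream reports an error.
async function saveStream(readable, filePath) {
  await pipeline(readable, fs.createWriteStream(filePath));
  return filePath;
}

// Possible usage with the axios response from the example above:
//   const response = await axios.get(imageSrc, { responseType: 'stream' });
//   await saveStream(response.data, 'logo.png');
```

Compared with a bare `pipe()`, this also surfaces stream errors as a rejected promise instead of leaving them unhandled.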

Generating JSON from scraping

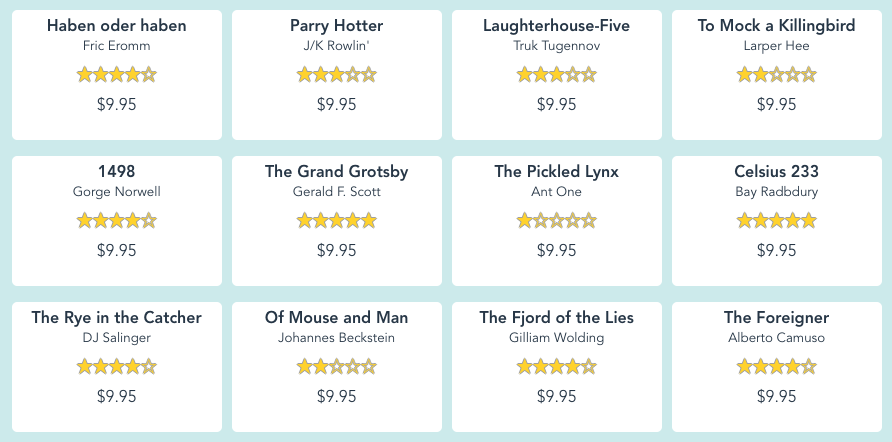

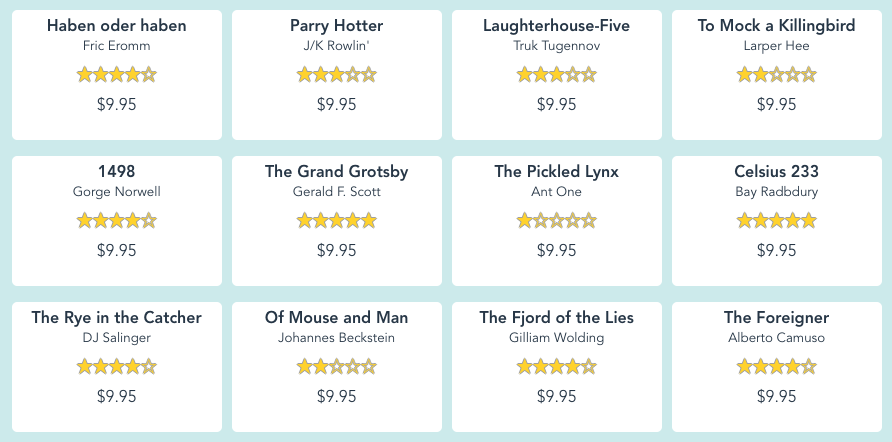

Once we start scraping more information, we might want to have it stored in a standard format for later use. Let’s gather the title, author and price from each book that appears on the home page of our test site:

The code for that could look like this:

```javascript
// Example code for scraping data and generating JSON
const { chromium } = require('playwright');
const fs = require('fs');

(async () => {
  const browser = await chromium.launch();
  const page = await browser.newPage();
  await page.goto('https://danube-web.shop/');

  // Build one plain object per book element
  const books = await page.$$eval('.book-item', elements =>
    elements.map(element => ({
      title: element.querySelector('.book-title').innerText,
      author: element.querySelector('.book-author').innerText,
      price: element.querySelector('.book-price').innerText
    }))
  );

  // Pretty-print with two-space indentation
  fs.writeFileSync('books.json', JSON.stringify(books, null, 2));
  console.log('Books data saved to books.json');

  await browser.close();
})();
```

The resulting books.json file will look like the following:

```json
[
  {
    "title": "Haben oder haben",
    "author": "Fric Eromm",
    "price": "$9.95"
  },
  {
    "title": "Parry Hotter",
    "author": "J/K Rowlin'",
    "price": "$9.95"
  },
  {
    "title": "Laughterhouse-Five",
    "author": "Truk Tugennov",
    "price": "$9.95"
  },
  {
    "title": "To Mock a Killingbird",
    "author": "Larper Hee",
    "price": "$9.95"
  },
  ...
]
```
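The prices above are stored as display strings. If the data is meant for later processing, it may help to split them into a currency symbol and a number before writing the file. The sketch below assumes prices shaped like "$9.95"; `parsePrice` and `normalizeBooks` are hypothetical helpers:

```javascript
// Hypothetical helper: split a price string such as "$9.95" into a currency
// symbol and a numeric amount. Returns null when the text does not match.
function parsePrice(text) {
  const match = text.trim().match(/^([^\d]*)(\d+(?:\.\d+)?)$/);
  if (!match) return null;
  return { currency: match[1].trim(), amount: Number(match[2]) };
}

// Replace the price string on each scraped book with the parsed form.
function normalizeBooks(books) {
  return books.map(book => ({ ...book, price: parsePrice(book.price) }));
}

// Possible usage before writing the file:
//   fs.writeFileSync('books.json', JSON.stringify(normalizeBooks(books), null, 2));
```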

Further reading

- Playwright’s official API reference on the topic

- An E2E example test asserting against an element’s innerText
