When we decided to build Checkly's browser checks, we chose to do so with Puppeteer, an open-source headless browser automation tool, later adding Playwright, too. We wanted to support users with synthetic monitoring and testing to let them know whether their websites worked as expected at any given moment. Speed was a primary concern in our case.

Yet determining which automation tool is generally faster is far from simple. We therefore decided to run our own benchmarks to see how newcomers Puppeteer and Playwright measured against the veteran WebDriverIO (using both the WebDriver protocol, via Selenium, and the DevTools automation protocol).

Our benchmark also produced some unexpected findings, like Puppeteer being significantly faster on shorter scripts and WebDriverIO showing larger than expected variability in the longer scenarios. Read on to learn more about the results and how we obtained them.

Why compare these automation tools?

A benchmark including Puppeteer/Playwright and Selenium is pretty much an apples-and-oranges comparison: these tools have significantly different scopes, and anyone evaluating them should be aware of their differences before speed is considered.

Still, since most of us had worked with Selenium for many years, we were keen to understand whether these newer tools were indeed any faster.

It is also important to note that WebDriverIO is a higher-level framework with plenty of useful features, which can drive automation on multiple browsers using different tools under the hood.

Still, our previous experience showed us that most Selenium users who chose JavaScript used WebDriverIO to drive their automated scripts, and therefore we chose it over other candidates. We were also quite interested in testing out the new DevTools mode.

Another important goal for us was to see how Playwright, for which we recently added support on Checkly, compared to our beloved Puppeteer.

Methodology, or how we ran the benchmark

Feel free to skip this section in case you want to get straight to the results. We still recommend you take the time to go through it, so that you can better understand exactly what the results mean.

General guidelines

A benchmark is useless if the tools being tested are tested under significantly different conditions. To avoid this, we put together and followed these guidelines:

- Resource parity: Every test was run sequentially on the same machine while it was "at rest", i.e. no heavy workloads were running in the background during the benchmark and potentially interfering with the measurements.

- Simple execution: Scripts were run as shown in the "Getting started" documentation of each tool (e.g. by running node script.js for Playwright) and with minimal added configuration.

- Comparable scripts: We strived to minimize differences in the scripts that were used for the benchmark. Still, some instructions had to be added/removed/tweaked to achieve stable execution.

- Latest everything: We tested all the tools in the latest version available at the time this article was published.

- Same browser: All the scripts ran against headless Chromium.

See the section below for additional details on all points.

Technical setup

For each benchmark, we gathered data from 1000 successful sequential executions of the same script.
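To make the idea of "successful sequential executions" concrete, here is a minimal sketch of a harness that could collect such timings. It is not the code used for this benchmark: the function name `runBenchmark`, the `maxAttempts` safety cap and the choice to silently discard failed runs are all assumptions made for illustration, and `fakeScript` is just a stand-in for a real browser script.

```javascript
// Hypothetical harness: runs `task` one execution at a time until `targetRuns`
// executions have succeeded, recording the duration of each success in seconds.
// `maxAttempts` is an assumed safety cap so a permanently failing task cannot loop forever.
async function runBenchmark(task, targetRuns, maxAttempts = targetRuns * 2) {
  const durations = [];
  let attempts = 0;

  while (durations.length < targetRuns && attempts < maxAttempts) {
    attempts += 1;
    const start = process.hrtime.bigint(); // monotonic clock, in nanoseconds
    try {
      await task(); // sequential: the next run starts only after this one ends
      const elapsedNs = process.hrtime.bigint() - start;
      durations.push(Number(elapsedNs) / 1e9);
    } catch (err) {
      // Assumption: failed runs are discarded and only successes are counted.
    }
  }

  return durations;
}

// Stand-in for a real browser script (e.g. a login flow); purely illustrative.
const fakeScript = () => new Promise((resolve) => setTimeout(resolve, 5));

runBenchmark(fakeScript, 10).then((durations) => {
  console.log(`collected ${durations.length} successful runs`);
});
```

Timing each run with a monotonic clock avoids distortions from system clock adjustments, which matters when many short runs are measured back to back.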

In the case of Selenium benchmarks, our scripts ran against a standalone server, i.e. we did not start a new server from scratch for each run (even though we always used clean sessions), as some frameworks do. We made this choice to limit overhead on execution time.

We ran all tests on the latest-generation MacBook Pro 16" running macOS Catalina 10.15.7 (19H2) with the following specs:

Model Identifier: MacBookPro16,1

Processor Name: 6-Core Intel Core i7

Processor Speed: 2,6 GHz

Number of Processors: 1

Total Number of Cores: 6

L2 Cache (per Core): 256 KB

L3 Cache: 12 MB

Hyper-Threading Technology: Enabled

Memory: 16 GB

We used the following dependencies:

bench-wdio@1.0.0 /Users/ragog/repositories/benchmarks/scripts/wdio-selenium

├── @wdio/cli@6.9.1

├── @wdio/local-runner@6.9.1

├── @wdio/mocha-framework@6.8.0

├── @wdio/spec-reporter@6.8.1

├── @wdio/sync@6.10.0

├── chromedriver@87.0.0

└── selenium-standalone@6.22.1

scripts@1.0.0 /Users/ragog/repositories/benchmarks/scripts

├── playwright@1.6.2

└── puppeteer@5.5.0

You can find the scripts we used, together with the individual results they produced, in the dedicated GitHub repository.

Measurements

Our results will show the following values, all calculated across 1000 runs:

- Mean execution time (in seconds)

- Standard deviation (in seconds): A measure of the variability in execution time.

- Coefficient of variation (CV): A unitless coefficient showing the variability of results in relation to the mean.

- P95 (95th-percentile measurement): The highest value left when the top 5% of a numerically sorted set of collected data is discarded. Interesting to understand what a non-extreme but still high data point could look like.
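The four values listed above can be computed from an array of execution times roughly as follows. This is a sketch rather than the code behind our numbers: the function names are hypothetical, and the sample (n - 1) form of the standard deviation is an assumption, since the population form would be an equally reasonable choice.

```javascript
// Arithmetic mean of the collected execution times.
function mean(values) {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Sample standard deviation (n - 1 denominator; an assumed convention).
function standardDeviation(values) {
  const m = mean(values);
  const squaredDiffs = values.reduce((sum, v) => sum + (v - m) ** 2, 0);
  return Math.sqrt(squaredDiffs / (values.length - 1));
}

// Coefficient of variation: standard deviation relative to the mean (unitless).
function coefficientOfVariation(values) {
  return standardDeviation(values) / mean(values);
}

// P95 as described above: sort ascending, discard the top 5% of data points,
// and return the highest value that is left.
function p95(values) {
  const sorted = [...values].sort((a, b) => a - b);
  const keep = Math.max(Math.ceil((sorted.length * 95) / 100), 1);
  return sorted[keep - 1];
}
```

With 1000 runs, `p95` drops the 50 slowest executions and returns the slowest of the remaining 950.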

What we did not measure (yet):

- Reliability: Unreliable scripts quickly become useless, no matter how fast they execute.

- Parallelisation efficiency: Parallel execution is very important in the context of automation tools. In this case, though, we first wanted to understand the speed at which a single script could be executed.

- Speed in non-local environments: Like parallelisation, cloud execution is also an important topic which remains outside of the scope of this first article.

- Resource usage: The amount of memory and computing power needed can determine where and how you are able to run your scripts.

Stay tuned, as we might explore these topics in upcoming benchmarks.

The results

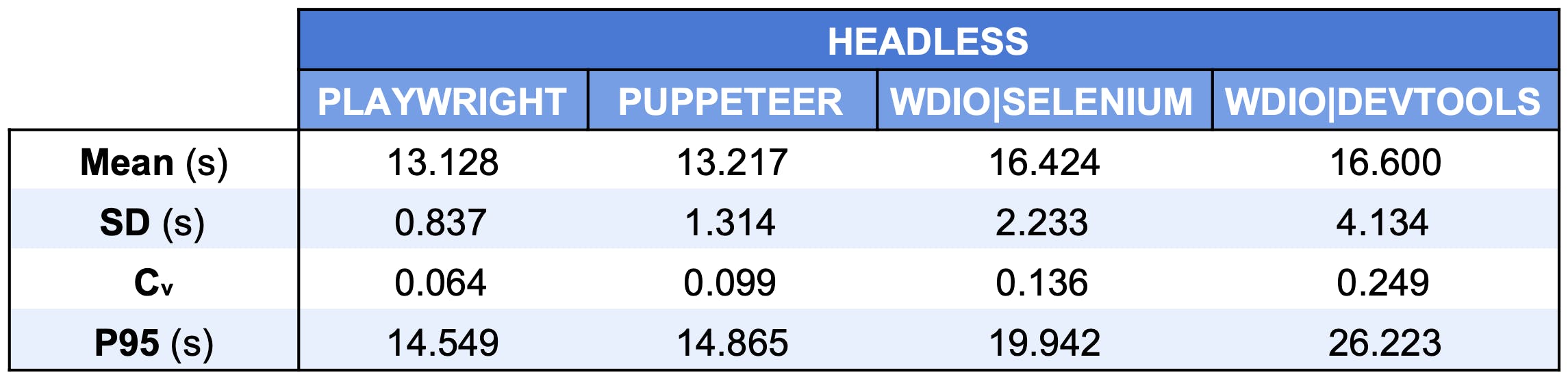

Below you can see the aggregate results for our benchmark. You can find the full data sets in our GitHub repository.

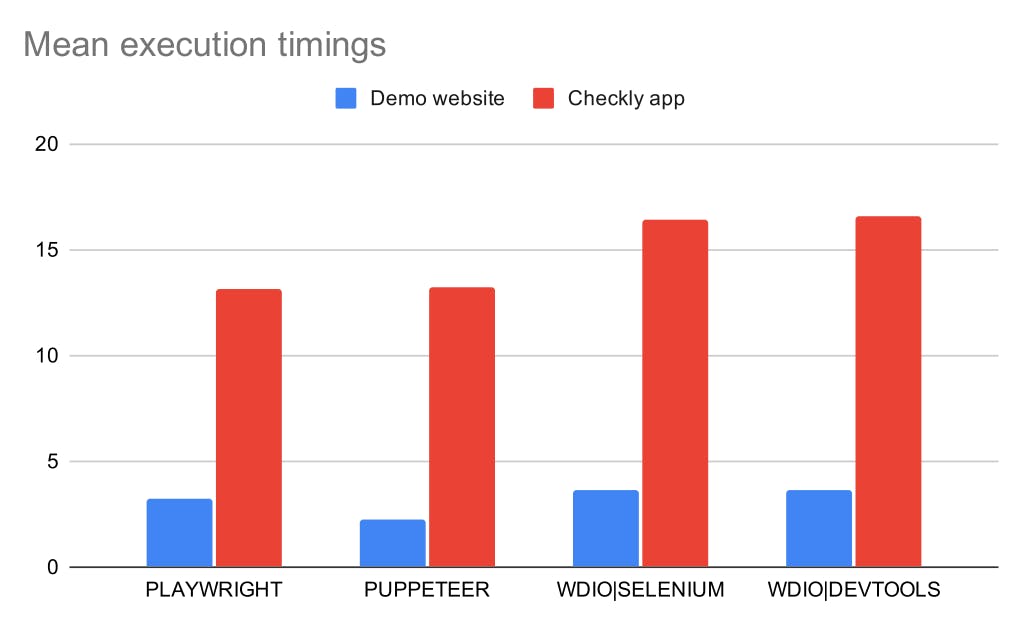

Running against a demo website

Our first benchmark ran against our demo website. Hosted on Heroku, this web page is built using Vue.js and has a tiny Express backend. In most cases, no data is actually fetched from the backend, and the frontend is instead leveraging client-side data storage.

In this first scenario, performing a quick login procedure, we expected an execution time of just a few seconds, great for highlighting potential differences in startup speed between the actual tools.

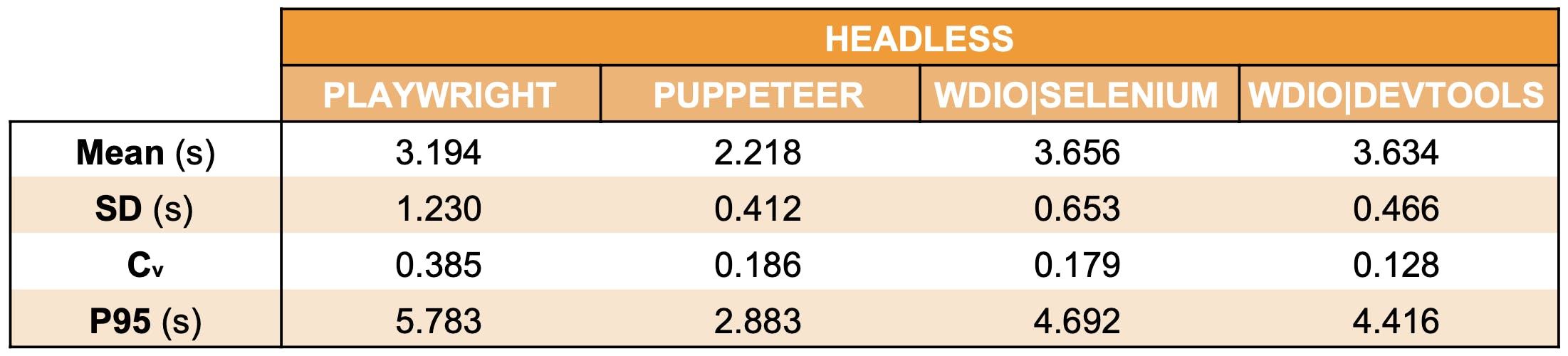

The aggregate results are as follows:

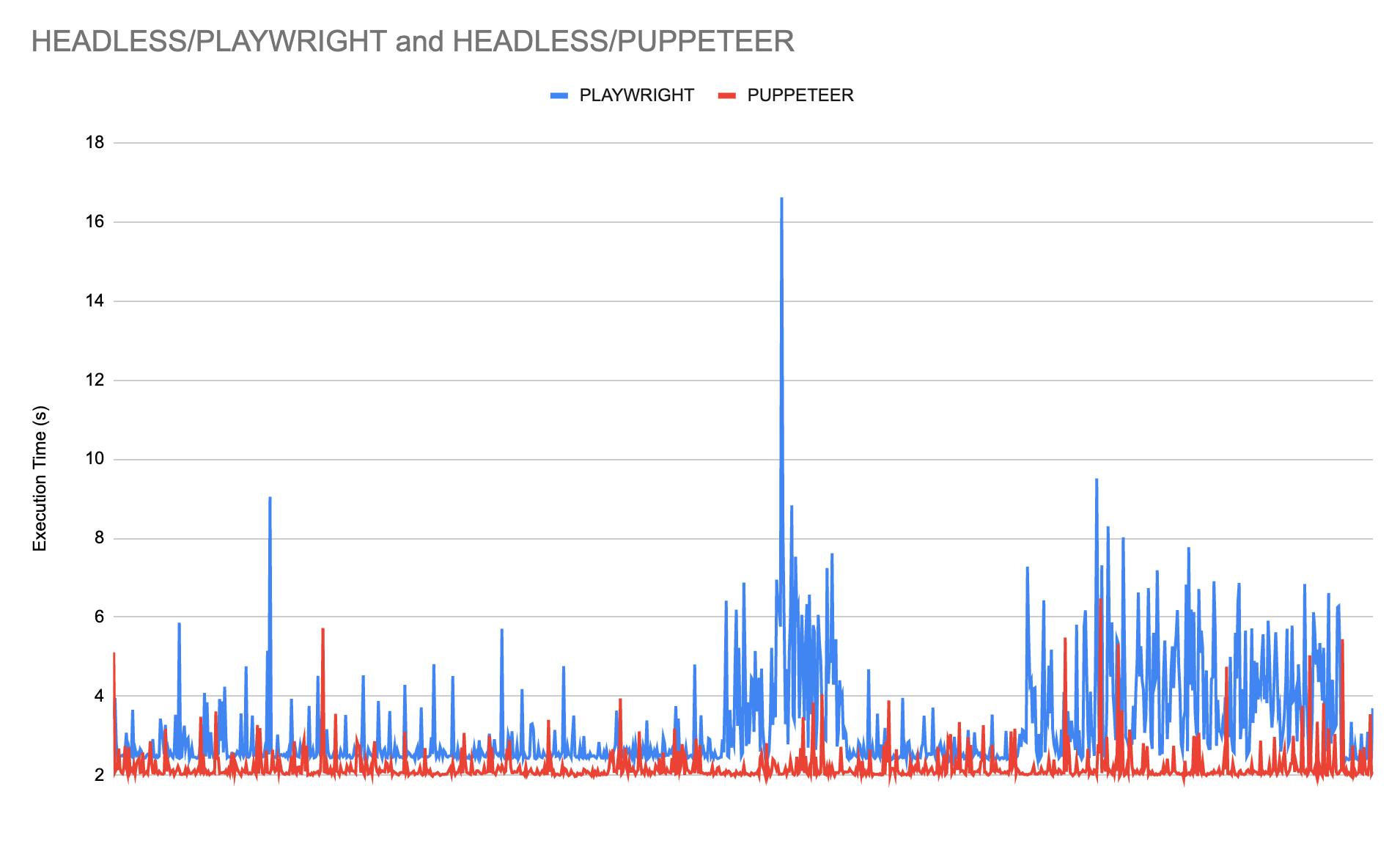

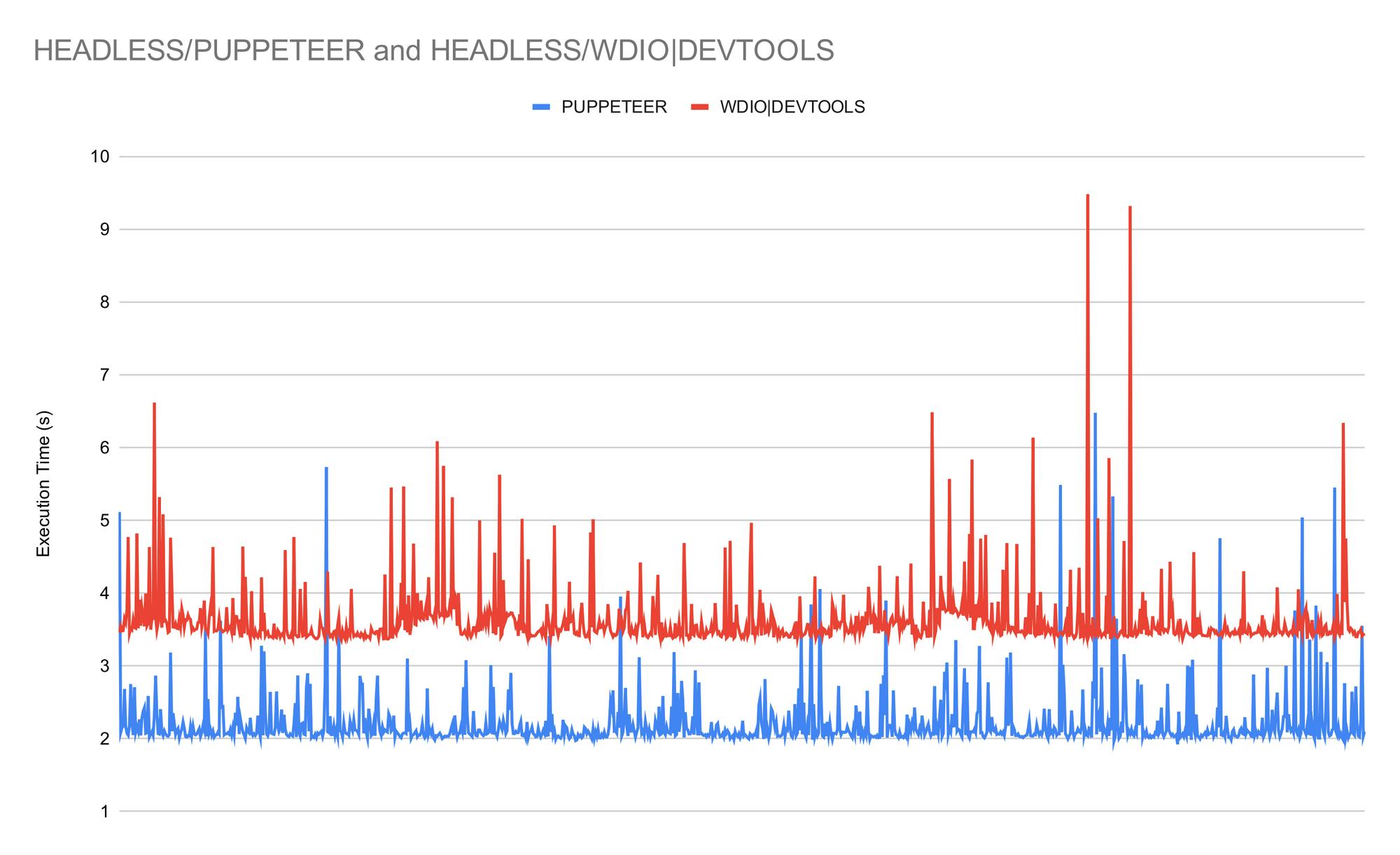

The first thing that catches one's attention is the large difference between the average execution time for Playwright and Puppeteer, with the latter being almost 30% faster and showing less variation in its performance. This left us wondering whether this was due to a higher startup time on Playwright's side. We parked this and similar questions to avoid scope creep for this first benchmark.

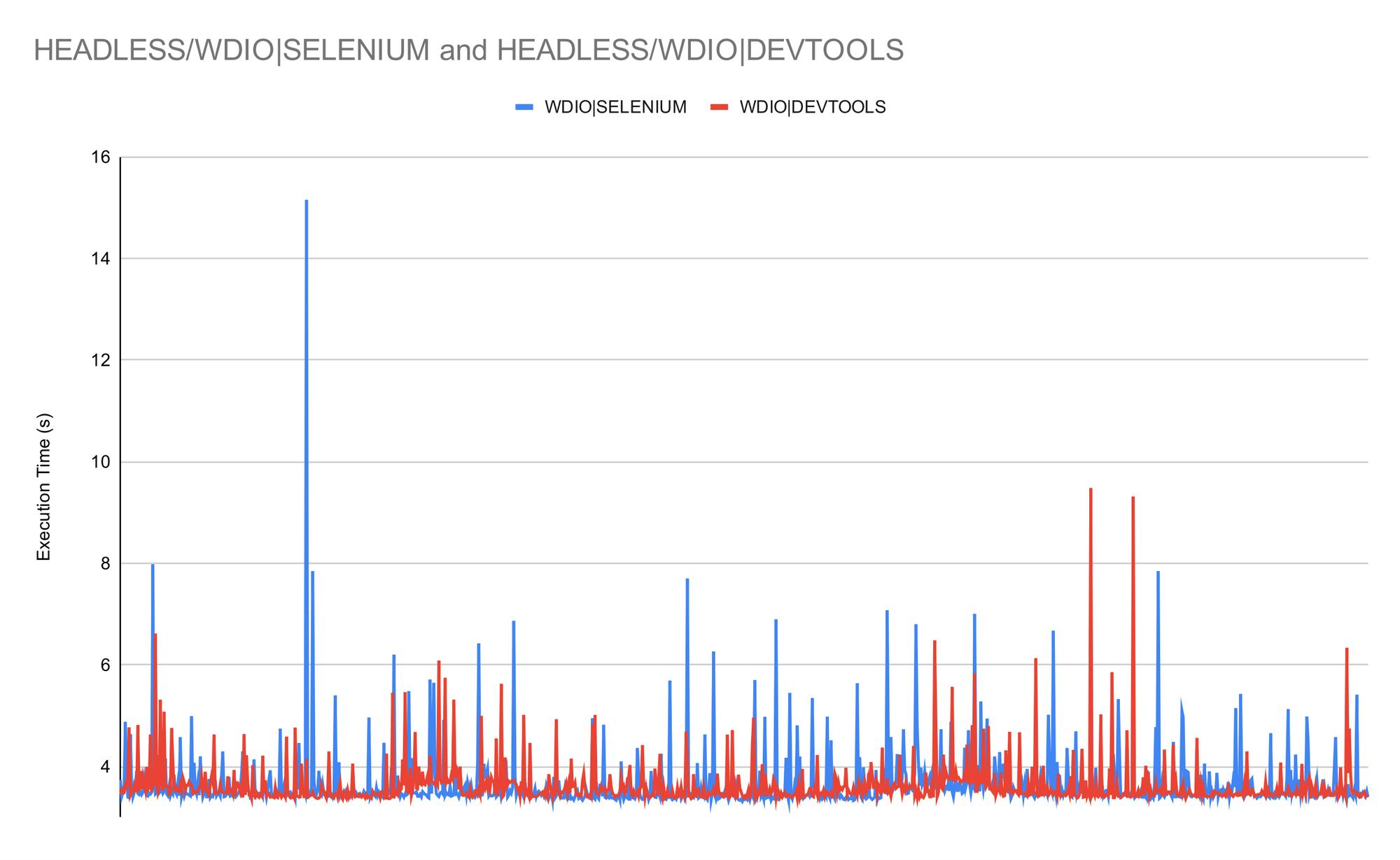

The second surprise was the lower overall variability shown in the WebDriverIO runs. Also interesting is just how close the results are: the chart shows the lines crossing each other continuously, as the automation protocol does not seem to make a sizeable difference in execution time in this scenario.

Less surprising is perhaps that running Puppeteer without any added higher-level framework helps us shave off a significant amount of execution time on this very short script.

Running against a real-world web application

Previous experience has taught us that the difference between a demo environment and the real world is almost always underestimated. We were therefore very keen to have the benchmarks run against a production application. In this case we chose our own, which runs a Vue.js frontend and a backend which heavily leverages AWS.

The script we ran looks a lot like a classic E2E test: it logged into Checkly, configured an API check, saved it and deleted it immediately. We were looking forward to this scenario, but each of us had different expectations on what the numbers would look like.

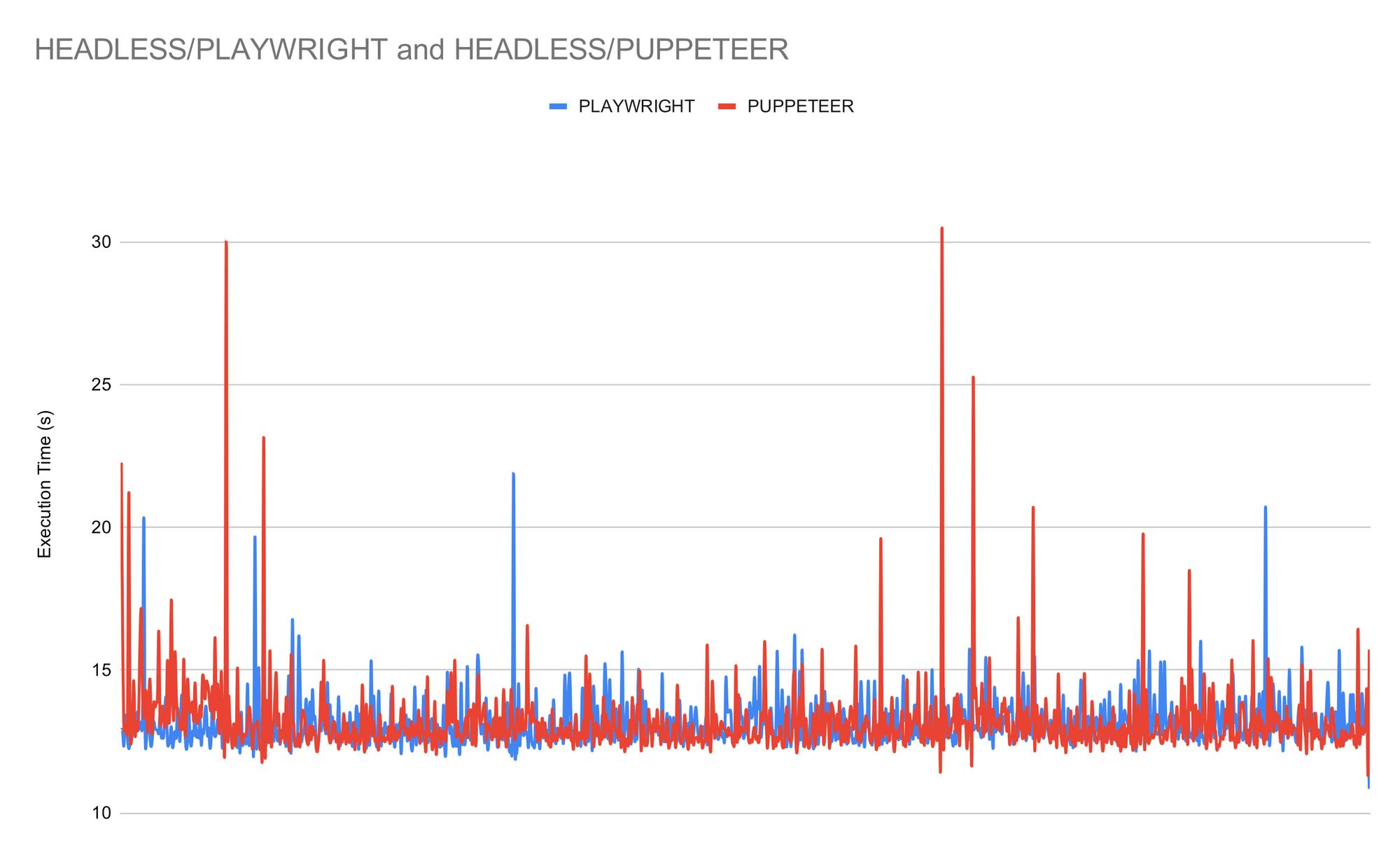

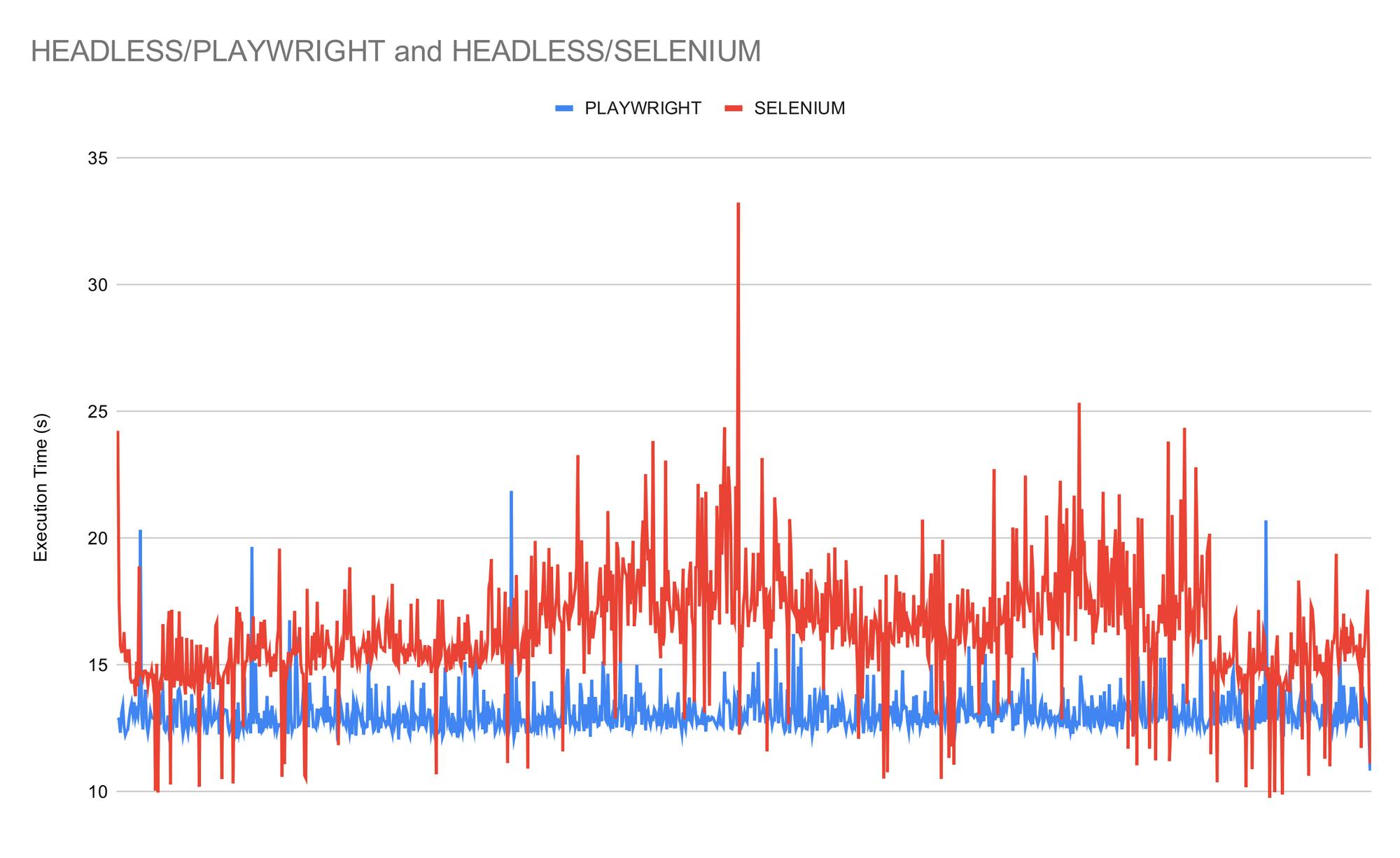

In this case the difference in execution time between Playwright and Puppeteer has all but vanished, with the former now coming out on top and displaying a slightly lower variability.

Proportionally, the difference between the newer tools and both flavours of WebDriverIO is also lower. It is worth noting that the latter two are now producing more variable results compared to the previous scenario, while Puppeteer and Playwright are now displaying smaller variations.

Interestingly enough, our original test for this scenario included injecting cookies into a brand new session to be able to skip the login procedure entirely. This approach was later abandoned as we encountered issues on the Selenium side, with the session becoming unresponsive after a certain number of cookies had been loaded. WebDriverIO handled this reliably, but the cookie injection step exploded the variability in execution time, sometimes seemingly hanging for longer than five seconds.

We can now step back and compare the execution times across scenarios:

Have doubts about the results? Run your own benchmark! You can use our benchmarking scripts shared above. Unconvinced about the setup? Feel free to submit a PR to help make this a better comparison.

Conclusion

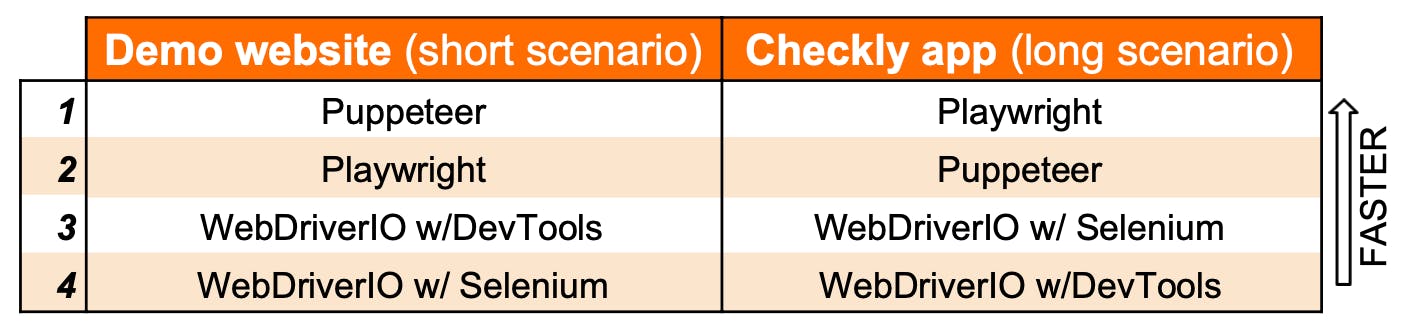

First off, let us rank the tools from fastest to slowest for both testing scenarios:

This first benchmark brought up some interesting findings:

- Even though Puppeteer and Playwright support similar APIs, Puppeteer seems to have a sizeable speed advantage on shorter scripts (close to 30% in our observations).

- Puppeteer and Playwright scripts show faster execution time (close to 20% in E2E scenarios) compared to the Selenium and DevTools WebDriverIO flavours.

- With WebDriverIO, WebDriver and DevTools automation protocols showed comparable execution times.
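When reading percentages like the ones above, it helps to be explicit about the baseline. The sketch below assumes that "X% faster" means the share of the slower tool's mean execution time that the faster tool saves; the function name `percentFaster` is hypothetical, and the inputs in the usage line are made-up round numbers, not benchmark results.

```javascript
// Percentage of the slower tool's mean execution time saved by the faster tool.
// Assumption: the slower tool's mean is used as the baseline.
function percentFaster(fasterMean, slowerMean) {
  if (slowerMean <= 0) {
    throw new RangeError('slowerMean must be positive');
  }
  return ((slowerMean - fasterMean) / slowerMean) * 100;
}

// Illustrative inputs only (seconds): not taken from the benchmark data.
console.log(percentFaster(7, 10).toFixed(1));
```

Using the faster tool's mean as the baseline instead would give a larger figure for the same pair of measurements, so the two conventions should not be mixed when comparing results.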

Takeaways

- When running lots of quicker scripts, if there is no need to run cross-browser, it might be worth running Puppeteer to save time. On longer E2E scenarios, the difference seems to vanish.

- It pays off to consider whether one can run a more barebones setup, or if the convenience of WebDriverIO's added tooling is worth waiting longer to see your results.

- Fluctuations in execution time might not be a big deal in a benchmark, but in the real world they could pile up and slow down a build. Keep this in mind when choosing an automation tool.

- Looking at the progress on both sides, we wonder if the future will bring DevTools to the forefront, or if WebDriver will keep enjoying its central role in browser automation. We suggest keeping an eye on both technologies.

Speed is important, but it can't tell the whole story. Stay tuned, as we surface new and practical comparisons that tell us more about the tools we love using.

Banner image: "Evening View of Takanawa", Utagawa Hiroshige, 1835, Japan.